Control Node Configuration

This document describes the tasks to perform after completing package installation on the control node.

- Node A Initial Setup: Configure the basic settings for Node A using the web installer.

- Federation Communication Account Setup: Set up federation accounts used for inter-node communication.

- Node B Initial Setup: Configure the basic settings for Node B to match Node A's configuration.

- Web Console Login: Log in to the web console on both Node A and Node B.

- License Registration: Register licenses on each node.

- Control Node Pair Setup: Configure Node A and Node B as a pair and set a unique identifier for the node pair.

- Network Redundancy: Configure network high availability (HA) using a virtual IP address (VIP).

- Accessing the Web Console via the Representative IP Address: Access the web console using the representative IP address.

Node A Initial Setup

The control node provides a web-based user interface (web console). When an administrator accesses the web console for the first time, the web installer launches to configure initial settings appropriate for the server operating environment.

Accessing the Web Console

-

Open a web browser and navigate to the IP address or FQDN of control node A (e.g.,

https://192.0.2.1,https://c1a.example.com,https://c1a). -

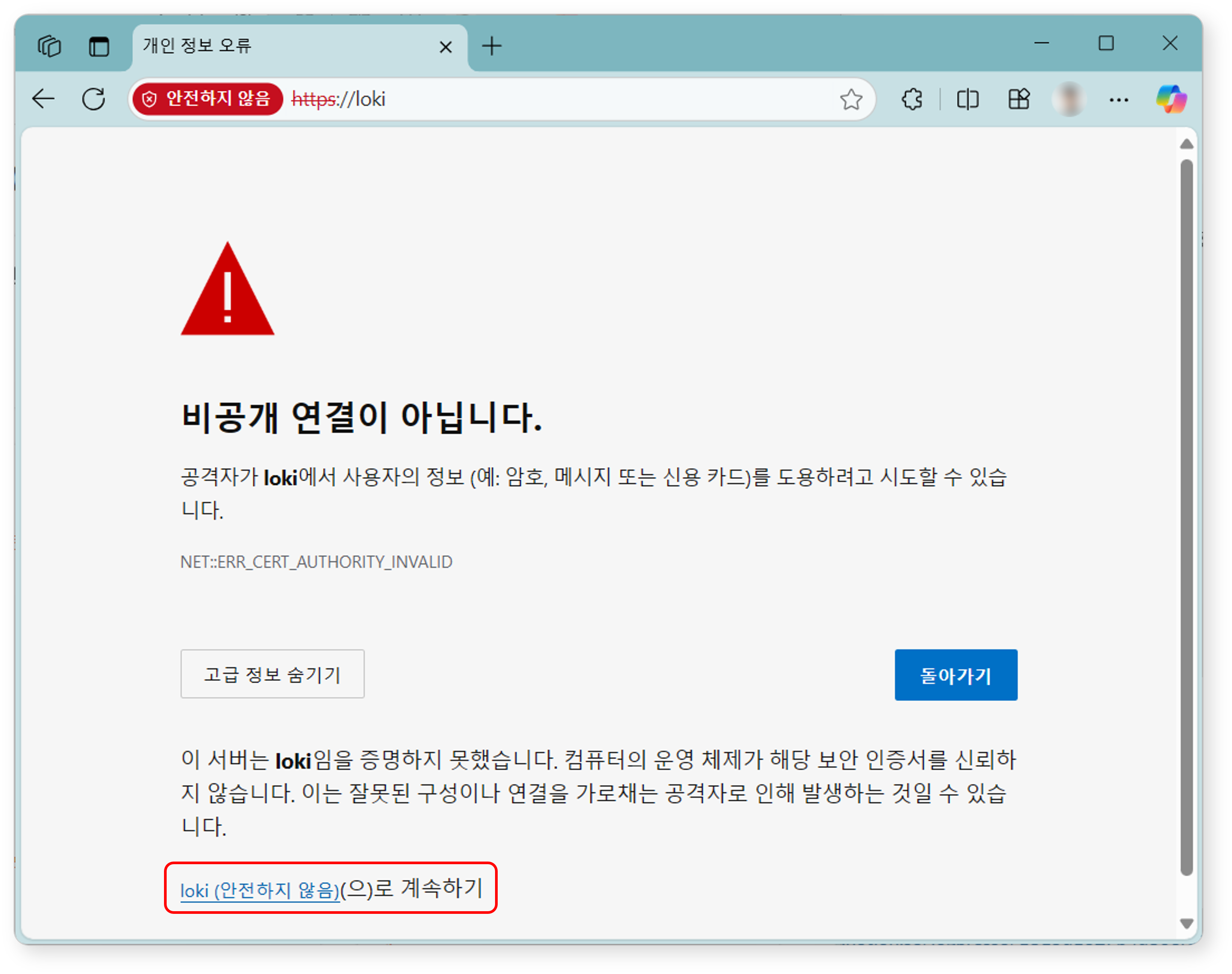

Since a private SSL certificate is used, the web browser will display a security warning message. If there are no concerns, ignore the security warning and proceed with the connection.

Registering the Cluster Administrator

Register the cluster administrator, who is the top-level administrator of Logpresso Sonar.

- When the Register Cluster Administrator screen appears, enter the required information for the cluster administrator registration.

  - Company/Organization Name: The name of the company or organization that will operate Logpresso Sonar

  - Administrator Name: The name of the cluster administrator. When the cluster administrator logs in, the administrator name is displayed in the upper-right corner of the web console.

  - Language: The language for the cluster administrator. Either Korean or English is automatically selected based on the language of the connected web browser.

  - Email: The email address of the cluster administrator

  - Administrator Account: The login name of the cluster administrator. Do not use well-known login names such as root, system, admin, administrator, logpresso, or sonar.

  - Password/Confirm Password: The login password for the cluster administrator

  - Administrator IP Address: Used to restrict cluster administrator access to specific IP addresses. You can specify up to 2 IP addresses.

- Click the Next button to proceed to the next step.

Certificate and Web Server Address Settings

Replace the certificates and configure the web server address. The web server address is used when employees access the system to write or review justifications for security events.

-

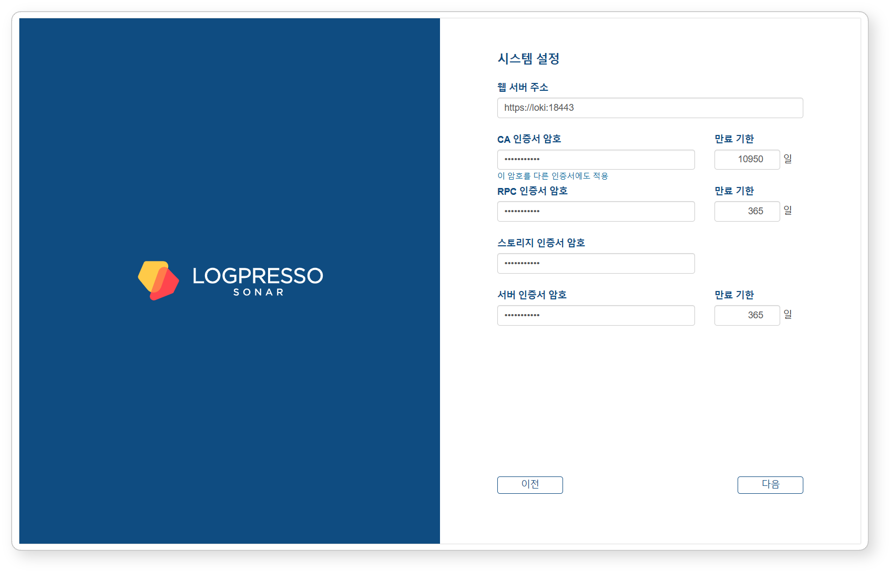

When the System Settings screen appears, enter the required information for system settings.

-

Web Server Address: The web console address. Use the representative IP address or FQDN of the control node pair as follows:

# Representative IP address of the control node: 192.0.2.24 # FQDN: sonar.example.com https://192.0.2.24 or https://sonar.example.com -

CA Certificate Password: CA certificate password (default validity period/maximum: 10,950 days). After entering the password, click Apply this password to other certificates to apply the same password to all other certificates.

-

RPC Certificate Password: Certificate password used for communication with sentries (default validity period/maximum: 365 days)

-

Storage Certificate Password: Password for the certificate that stores the table encryption key. This certificate has no expiration date.

-

Server Certificate Password: Password for the web server certificate used when accessing the Logpresso Sonar web console (default validity period/maximum: 365 days)

-

-

Click the Next button to proceed to the next step.

Also keep the following in mind:

- You can change the Web Server Address on the Justification Template screen in the web console.

- You can view certificate information, reissue certificates, and perform other tasks on the Certificates screen in the web console.

Object Storage Settings

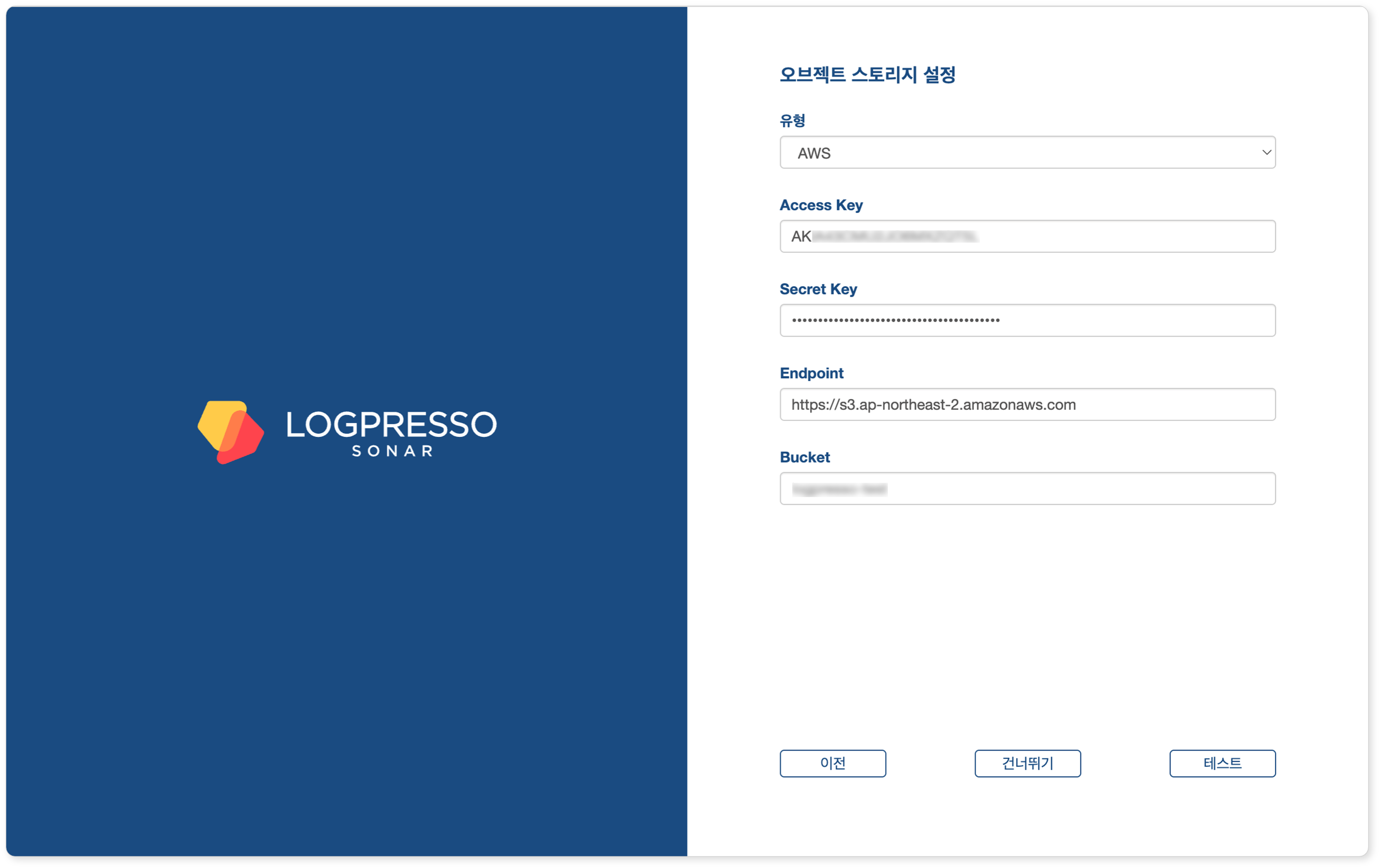

Configure the object storage to be used as Cold tier storage in the data lifecycle feature. If you do not use object storage or want to configure it later, click the Skip button.

- When the Object Storage Settings screen appears, enter the required information for object storage settings.

  - Type: Storage service provider (select one of AWS, S3 Compatible Storage, or kakaocloud; default: AWS)

  - Access Key or Authentication Key: Access key for connecting to the storage service

  - Secret Key or Secret access Key: Secret access key for connecting to the storage service

  - Endpoint: Storage service connection address

  - Bucket: Name of the storage bucket

- After entering all properties, click the Test button. You can proceed to the next step only after the test succeeds.

Also keep the following in mind:

- You can manage data according to its lifecycle by configuring the retention period for each storage tier on the Lifecycle tab and configuring rollover on the Storage tab in the Cluster screen of the web console.

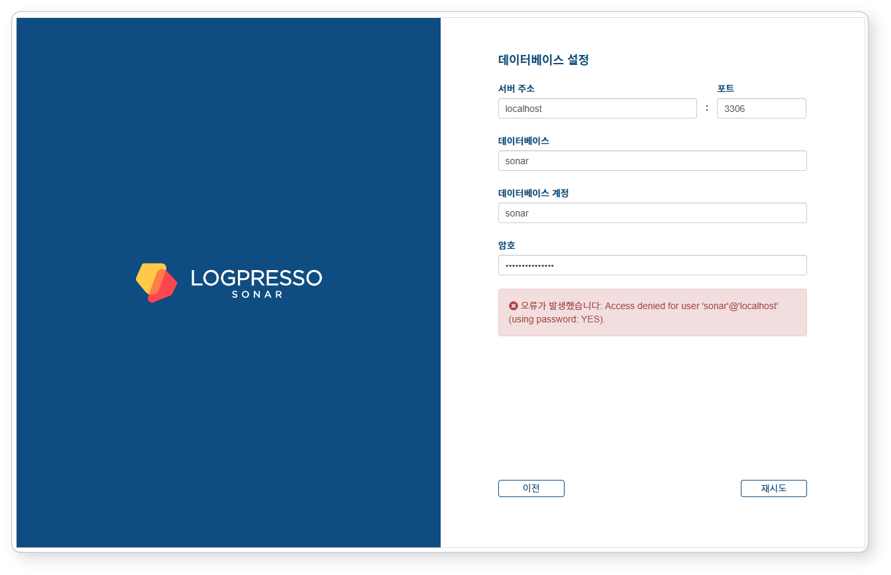

Database Settings

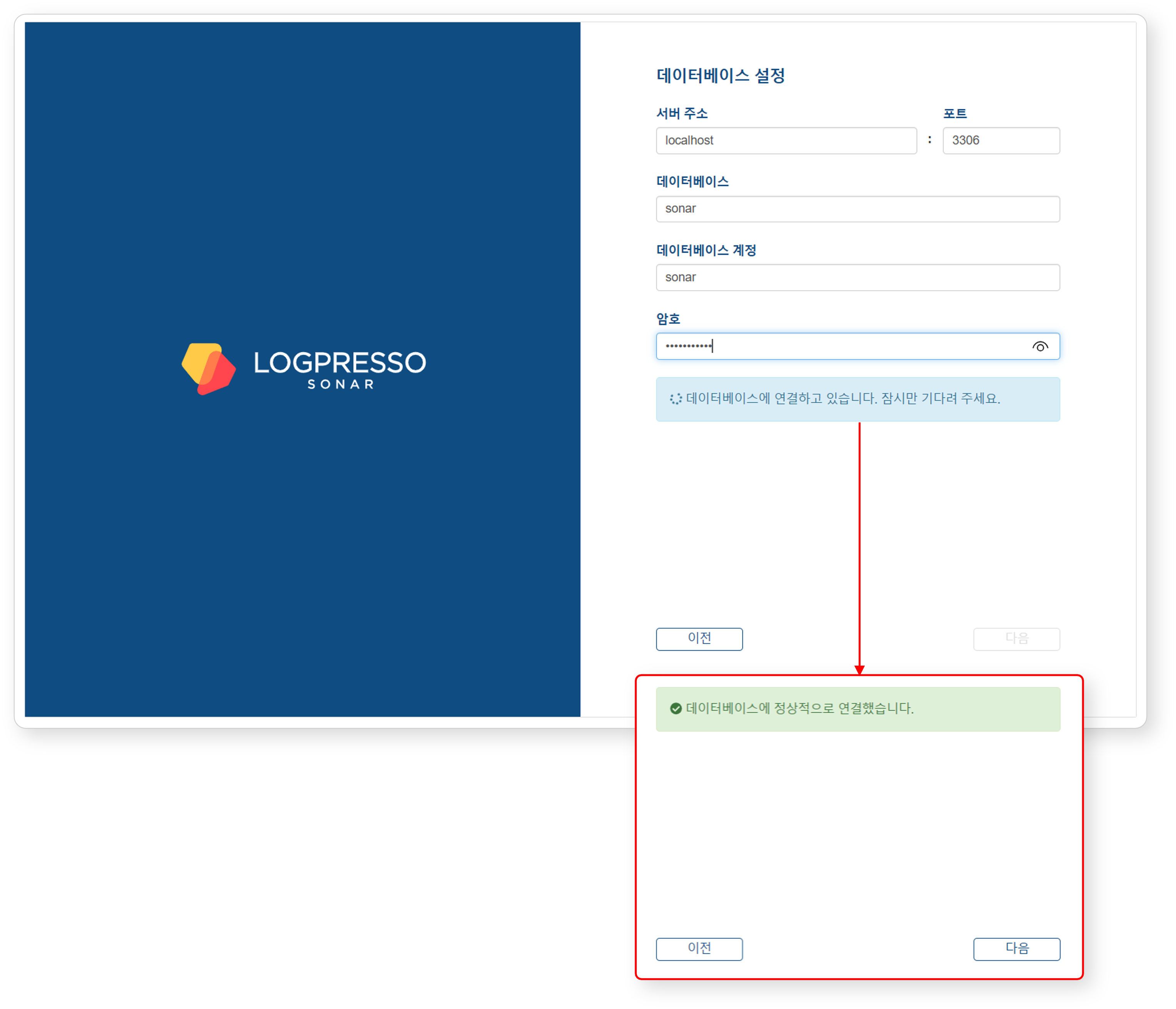

Now connect the MariaDB database to Logpresso Sonar.

- When the Database Settings screen appears, enter the database settings and click the Next button.

  - Server Address: Address of the MariaDB server (default: localhost)

  - Port: Port number of the MariaDB server (default: 3306)

  - Database: Database name on the MariaDB server (default: sonar)

  - Database Account: Dedicated database account for Logpresso Sonar (default: sonar)

  - Password: Password for the dedicated Logpresso Sonar database account

- Once all settings are entered, the system automatically attempts to connect to the database.

  - If the connection succeeds, the message "Successfully connected to the database." is displayed and the Next button is enabled.

  - If the connection fails, an error message appears. Check the error details, correct the settings, and click the Retry button.

- Once the database settings are complete, the configuration required to run Logpresso Sonar is applied automatically. All settings configured in the web installer are synchronized with Node B through the Galera Cluster.

- After the settings are applied, the certificates are replaced and the web console reconnects to the login screen. Since self-signed certificates are used, the web browser may display a security warning. Ignore the warning and proceed.

Summary

After completing these steps, the following tasks have been performed on Node A:

- Created the database and tables in MariaDB and injected default data

- Synchronized the database layer with Node B via the Galera Cluster

- Configured the application layer settings

- Created the JDBC profile required for database connection

- Configured the authentication service required for the cluster administrator to access the web console

The basic setup for Node A is complete, but the application layer settings for Node B still need to be configured. This will be done in the next steps.

If you are operating in standalone mode without configuring a cluster, proceed to the License Registration step.

Federation Communication Account Setup

For federation communication between nodes, you must set passwords for the Logpresso shell account and the federation account on both Node A and Node B. This process is performed in the Logpresso shell. The Logpresso shell is the CLI for the Logpresso engine and uses accounts separate from the web console login accounts (e.g., cluster administrator).

Accessing the Logpresso Shell

You must access the Logpresso shell on control node A and B separately and change the default password. It is recommended to register SSH keys as a precaution against password loss.

Changing the Default Password

- Run the following command in the terminal to access the Logpresso shell. The port number may differ depending on the SSH_PORT setting in the logpresso.conf file (default: 7022).

  ssh -p 7022 root@localhost

- When the password prompt appears, enter the default Logpresso shell password.

- When the new password prompt appears, enter a new password and press Enter. Since the Logpresso shell account is only used for SSH access to each node, it is acceptable to set a different password for each node.

  Please change the default password.

  New password: # Enter new password and press Enter

  Retype password: # Re-enter new password and press Enter

  Password changed successfully.

  Logpresso SNR-4.0.2511.1 (build 20250805) on Araqne Core 4.0.5

  logpresso> # Logpresso shell prompt

Caution: The Logpresso shell rejects reuse of the default password. Never reuse the default password for any account. Logpresso is not responsible for any issues caused by using the default password.

Depending on the operating system, the SSH key exchange and encryption algorithm negotiation may fail when connecting to the Logpresso shell. Add the following content to the ~/.ssh/config file on control node A and B, and connect using the ssh sonar command.

Host sonar

HostName 127.0.0.1

Port 7022

User root

HostKeyAlgorithms +ssh-rsa

PubkeyAcceptedAlgorithms +ssh-rsa

KexAlgorithms +diffie-hellman-group14-sha1

Ciphers +aes256-cbc

PreferredAuthentications publickey # SSH key login

IdentitiesOnly yes # SSH key login

IdentityFile ~/.ssh/logpresso_rsa # SSH key login
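The client entry above can be added so that repeated runs do not duplicate it. The following is a minimal sketch, assuming a POSIX shell. The helper name add_sonar_host and its file argument are illustrative and not part of Logpresso. The three key-login lines are left out on purpose so that password login keeps working until an SSH key has been registered.

```shell
# Append the "Host sonar" entry to an SSH client config file unless it already exists.
# add_sonar_host is a hypothetical helper name; the config path is the first argument.
add_sonar_host() {
    config_file="$1"
    mkdir -p "$(dirname "$config_file")"
    touch "$config_file"
    # Do nothing when an entry for the "sonar" alias is already present.
    if grep -Eq '^[[:space:]]*Host[[:space:]]+sonar[[:space:]]*$' "$config_file"; then
        return 0
    fi
    cat >> "$config_file" <<'EOF'
Host sonar
    HostName 127.0.0.1
    Port 7022
    User root
    HostKeyAlgorithms +ssh-rsa
    PubkeyAcceptedAlgorithms +ssh-rsa
    KexAlgorithms +diffie-hellman-group14-sha1
    Ciphers +aes256-cbc
EOF
    chmod 600 "$config_file"
}

# Example: add_sonar_host "$HOME/.ssh/config"
```

After the entry exists, ssh sonar connects with the options listed above.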

Registering SSH Keys (Optional)

You can access the Logpresso shell using SSH key authentication. Registering SSH keys is recommended as it allows access even if the password is lost.

- If you do not have an RSA key, run the following command to generate a key pair.

  ssh-keygen -t rsa -b 2048 -f ~/.ssh/logpresso_rsa

  Note: The Logpresso shell only supports ssh-rsa keys.

- Run the following command in the Logpresso shell to register the SSH public key.

  account.addSshKey root

- When the prompt appears, enter the SSH public key. The public key can be found in the ~/.ssh/logpresso_rsa.pub file.

  SSH public key? ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAACAQ... # Enter the full public key

  root password: # Enter the current password

  added

  Tip: You can also specify the absolute path to the public key file directly: account.addSshKey root /home/logpresso/.ssh/logpresso_rsa.pub

- Run the following command to verify the registered SSH key list.

  account.sshKeys root

  Running the command displays the registered SSH keys as follows:

  Authorized SSH keys

  ---------------------

  1: ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAACAQ...

- You can now access the Logpresso shell using the SSH key without a password.

  ssh -p 7022 -i ~/.ssh/logpresso_rsa root@localhost

Setting the Federation Account Password

The federation account is used for communication between all nodes in the cluster. All control/data/forwarder nodes must use the same federation account password. The federation account password set in this step is used not only for control node pair registration, but also for data node pairs and forwarder node pairs.

- Run the following command in the Logpresso shell on both Node A and Node B to change the federation account password.

  dom.resetPassword localhost root

- When the new password prompt appears, enter a new password and press Enter.

  New Password: # Enter new password and press Enter

  - You can only enter the password once. If you enter the password incorrectly, re-run the dom.resetPassword localhost root command.

  Note: The federation account root and the Logpresso shell account root belong to different authentication domains even though they share the same name. During the federation password change process, ERROR logs may be temporarily recorded in araqne.log, but this resolves after the change is complete.

- Set the password expiration of the federation account to unlimited as follows:

  - Open a web browser and navigate to https://<Control Node A IP Address>:8443, then log in with the federation account.

  - Navigate to System > Accounts in the left menu of the web console.

  - Click root in the federation account list.

  - Select Unlimited in the Password Expiration field and click the Save button.

  - Perform the same operation on Node B.

Node B Initial Setup

On Node A, the web installer automatically configures the authentication service and database connection. On Node B, these settings must be configured manually. Since Node A and B share the same MariaDB, completing this setup allows the cluster administrator to log in to the Node B web console with the same account.

sonar Authentication Service Setup

The sonar authentication service is used for web console user authentication. This setup must be completed before the cluster administrator can log in to the Node B web console.

- Run the following command in the Logpresso shell on Node B to enable the sonar authentication service.

  logdb.useAuthService sonar dom

- Run the following command to verify that the sonar authentication service is enabled.

  logdb.authServices

  In the External Auth Services list, if both dom and sonar have an asterisk (*) and the order is sonar - dom, the configuration is correct.

  External Auth Services

  ------------------------

  [*] dom - araqne-dom authentication provider for araqne logdb

  [*] sonar - com.logpresso.sonar.dom.impl.SonarAuthServiceImpl@5d7b80f7

  order: sonar - dom
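The three conditions for a correct configuration (dom enabled, sonar enabled, order sonar - dom) can also be checked mechanically. The following is a minimal sketch, assuming the logdb.authServices output has been saved to a text file, for example by copying it from the terminal, and that it is laid out one entry per line as in the example output. The helper name check_auth_services is illustrative.

```shell
# Read saved logdb.authServices output on stdin and verify the three conditions.
# check_auth_services is a hypothetical helper; the line layout is an assumption.
check_auth_services() {
    output="$(cat)"
    # Both services must be marked with [*].
    printf '%s\n' "$output" | grep -q '^[[:space:]]*\[\*\] dom ' || return 1
    printf '%s\n' "$output" | grep -q '^[[:space:]]*\[\*\] sonar ' || return 1
    # The lookup order must be sonar first, then dom.
    printf '%s\n' "$output" | grep -q '^[[:space:]]*order: sonar - dom[[:space:]]*$' || return 1
}

# Example: check_auth_services < authservices.txt && echo "auth services OK"
```

The function returns a non-zero status if any condition is not met, which makes it usable in a post-install checklist script.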

Creating the JDBC Profile

A JDBC profile must be created for Logpresso Sonar on Node B to connect to MariaDB.

- Run the following command to create the JDBC profile.

  logpresso.createConnectProfile jdbc sonar

- When the profile creation prompts appear, enter the values as follows:

  Connection String (required)? jdbc:mariadb://localhost:3306/sonar # Database connection string

  User (optional)? sonar # Database account created by the web installer on Node A (default: sonar)

  Password (optional)? # Password for the database account created by the web installer on Node A

  Read Only (optional)? false # Must enter false

  Granted users (csv)? # Press Enter to skip

  Granted groups (csv)? # Press Enter to skip

- Run the following command to check the GUID of the created JDBC profile.

  logpresso> logpresso.connectProfiles

  Connect Profiles

  ------------------

  guid=59f4788b-75df-4297-afbd-579eed9cb086, type=jdbc, name=sonar, description=null, source=ENT

- Run the following command with the confirmed GUID to verify that the profile was created correctly.

  logpresso> logpresso.connectProfile 59f4788b-75df-4297-afbd-579eed9cb086

  Connect Profile

  -----------------

  Type: jdbc

  Name: sonar

  Description: null

  Configs

  * Connection String: jdbc:mariadb://localhost:3306/sonar

  * User: sonar

  * Password: ********

  * Read Only: false
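The connection string entered at the prompt combines the Server Address, Port, and Database values from the Database Settings step on Node A. The following is a minimal sketch of that composition, assuming a POSIX shell. The helper name jdbc_url is illustrative, and the address 192.0.2.10 in the second call is a made-up documentation address.

```shell
# Build the MariaDB JDBC connection string from host, port, and database name.
# Defaults mirror the web installer defaults (localhost, 3306, sonar).
jdbc_url() {
    host="${1:-localhost}"
    port="${2:-3306}"
    database="${3:-sonar}"
    printf 'jdbc:mariadb://%s:%s/%s\n' "$host" "$port" "$database"
}

jdbc_url                         # prints jdbc:mariadb://localhost:3306/sonar
jdbc_url 192.0.2.10 3306 sonar   # prints jdbc:mariadb://192.0.2.10:3306/sonar
```

If non-default values were used in the web installer on Node A, pass the same values here so that both nodes point at the same database.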

Restarting the Logpresso Service

The Logpresso service must be restarted to apply the profile settings.

- Run the following command on Node B to restart the Logpresso service.

  sudo systemctl restart logpresso

- Run the following command to verify that the service is running properly.

  sudo systemctl status logpresso

  If the status shows Active: active (running), the service is running normally.

  logpresso.service - Logpresso daemon

  Loaded: loaded (/usr/lib/systemd/system/logpresso.service; enabled; preset: disabled)

  Active: active (running) since Sat 2025-12-13 10:56:58 KST; 6 days ago

  Docs: https://ko.logpresso.com/documents

  Main PID: 1682 (java)

  Tasks: 229 (limit: 23116)

  Memory: 4.1G

  CPU: 4d 40min 56.229s

  CGroup: /system.slice/logpresso.service

  └─1682 /opt/logpresso/jre/bin/java ...(omitted)... -jar /opt/logpresso/sonar/araqne-core-4.0.5-package.jar

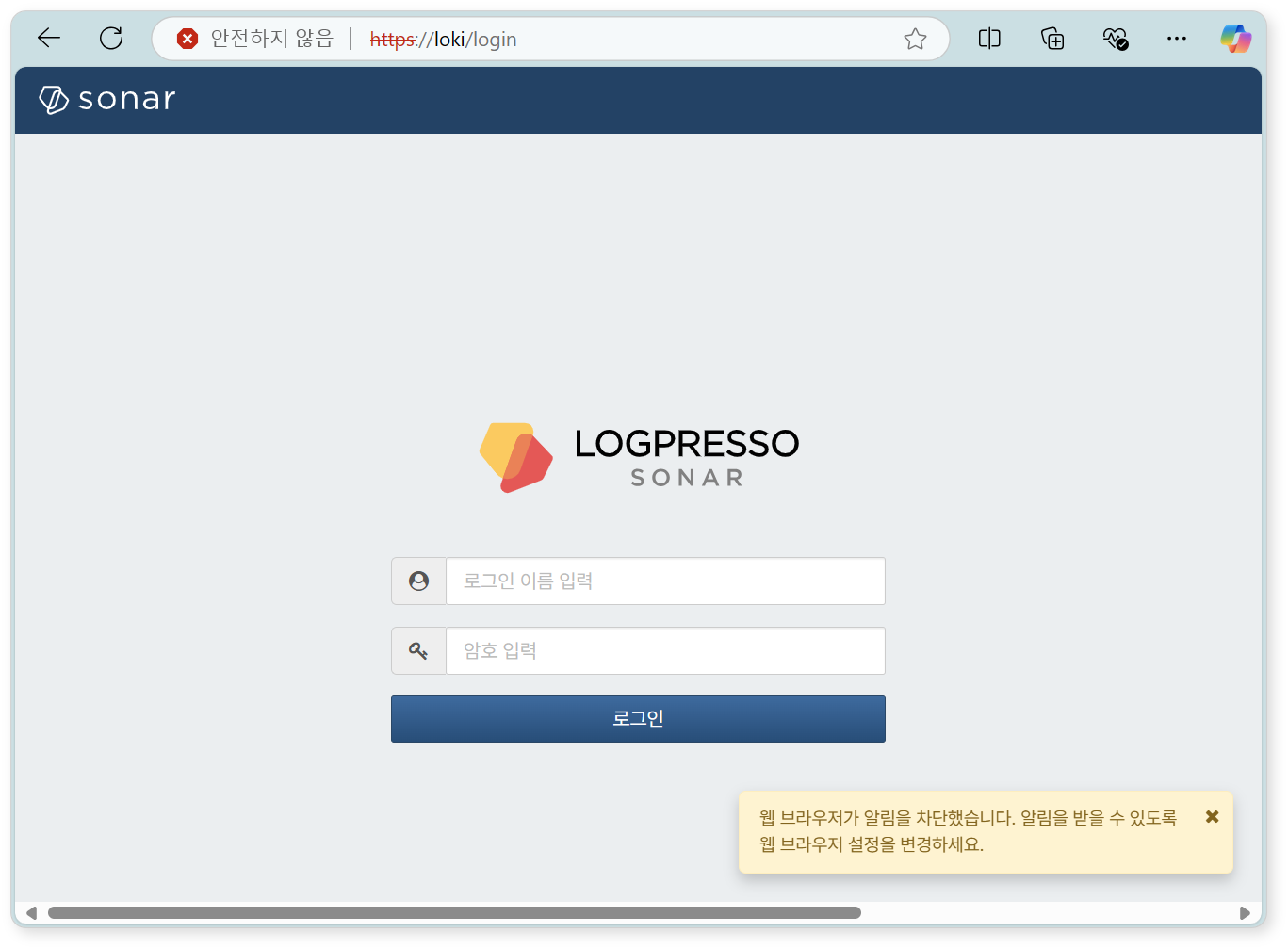

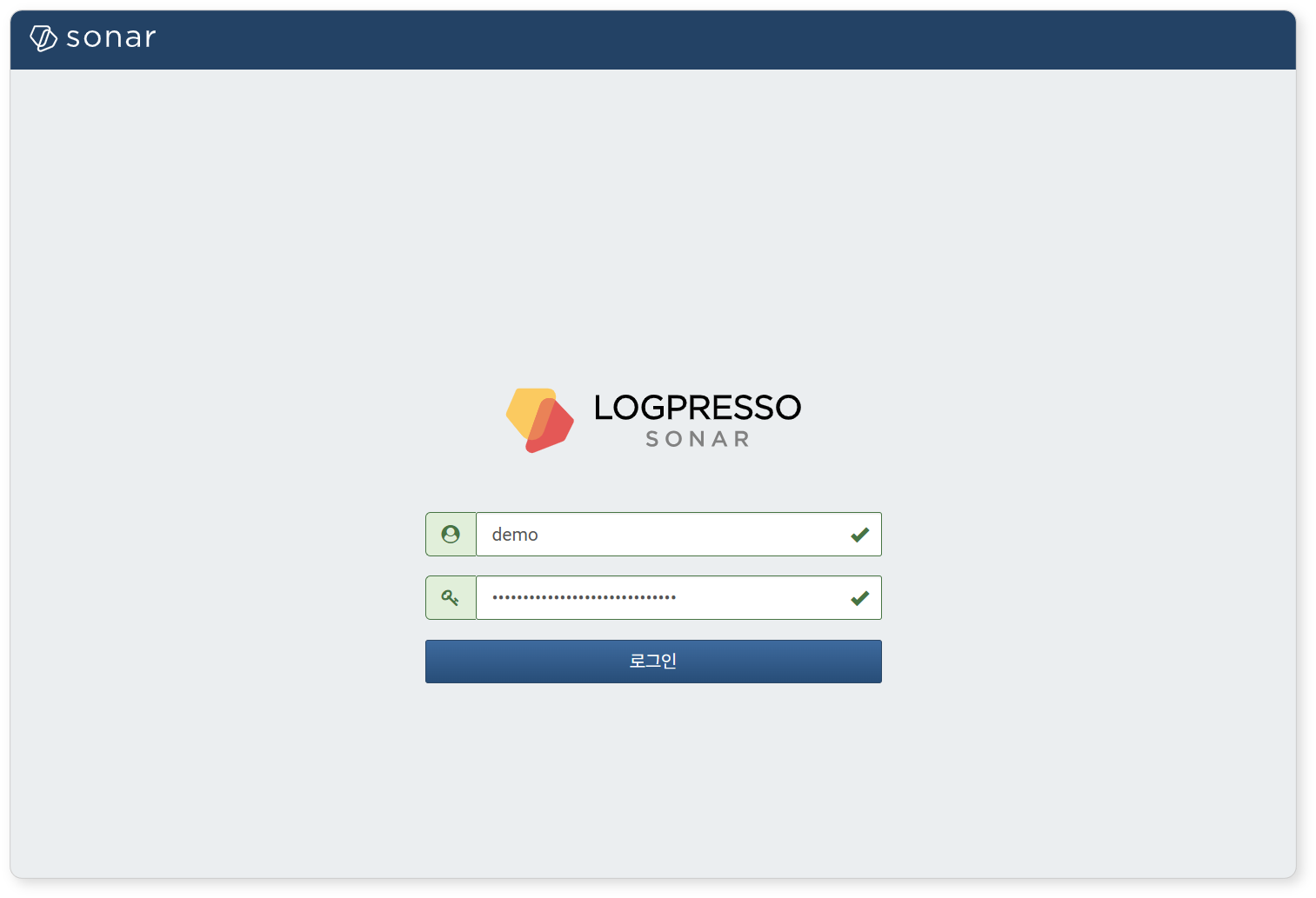

Web Console Login

Log in to the web console on both Node A and Node B. Log in with the cluster administrator account.

-

Verify that the login screen is displayed after running the web installer on Node A. If you closed the web browser window, navigate to

https://IP_ADDRorhttps://FQDN(e.g.,https://203.0.113.194,https://c1a.example.com). -

Access the web console on Node B using the same method.

-

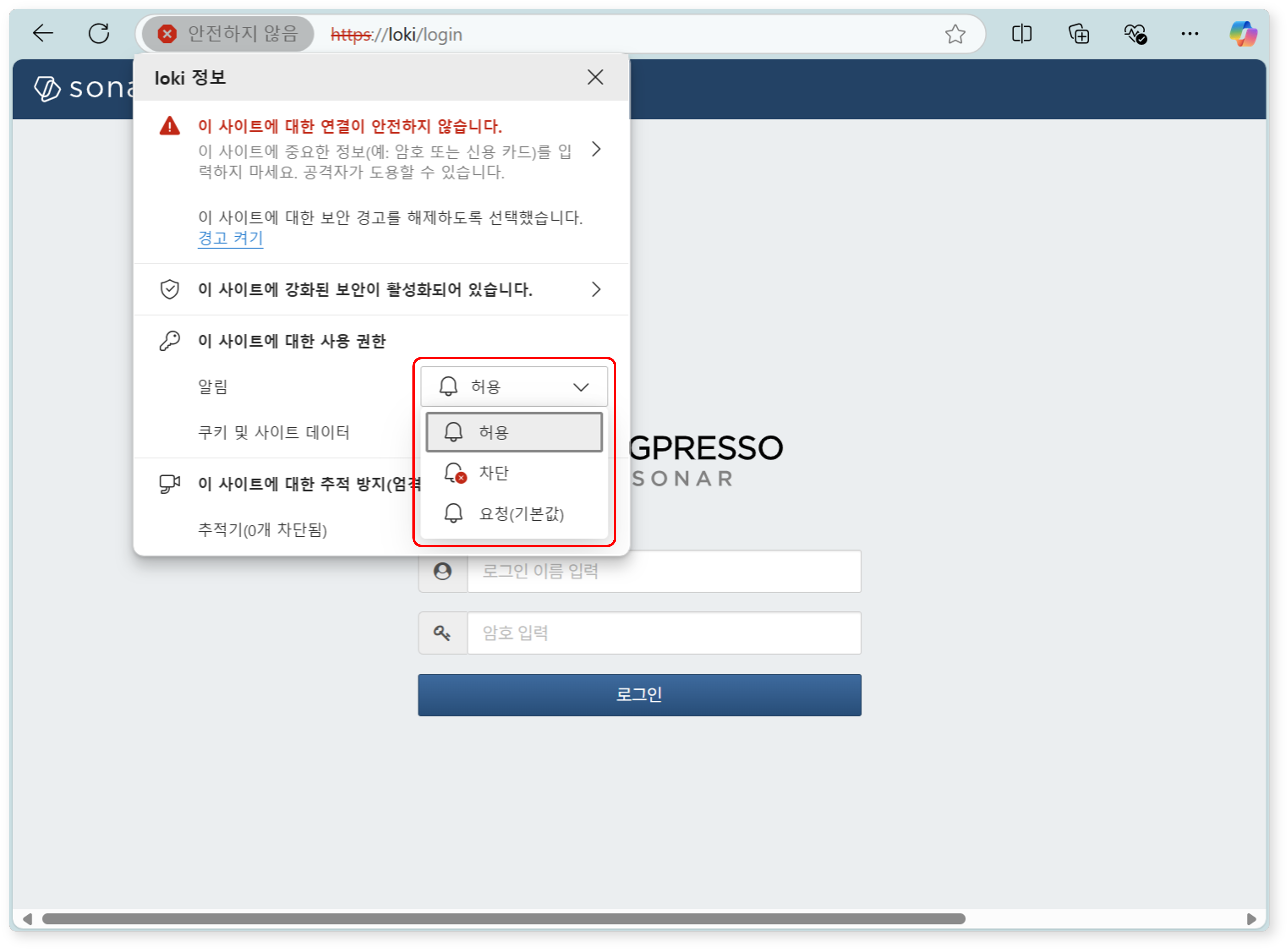

When you access the web console, a notification blocking message may appear in the lower-right corner of the screen.

-

Change the notification settings in the web browser to Allow so that server notifications can be viewed in the web console.

-

Enter the cluster administrator's login name and password, then click the Login button.

NoteIf you fail to log in multiple times, the account will be locked for a certain period. Contact the server administrator if you need to unlock the account or change the password.

License Registration

After logging in, the first thing to do is register the license.

Requesting a License

-

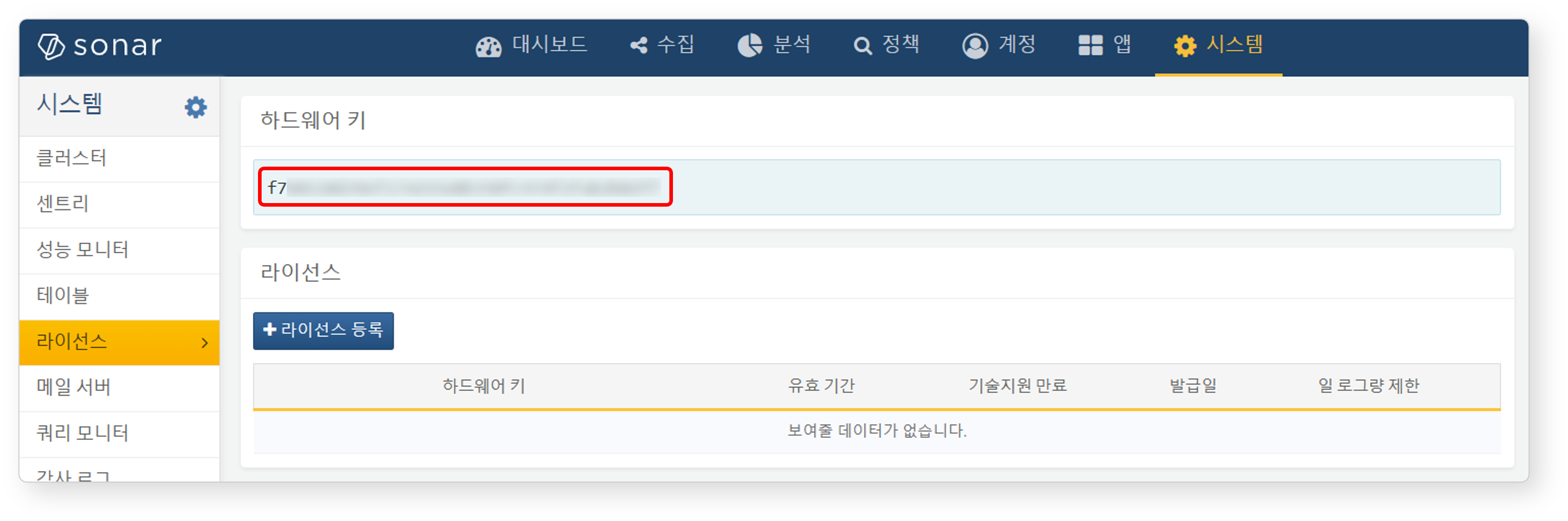

Check the Hardware Key on the System > License screen of the web console on both Node A and Node B, and record it in a safe place.

-

Request license issuance for the hardware keys of Node A and Node B on the Logpresso Support Portal.

Subsequent tasks must be performed after the license has been issued.

Entering the License

-

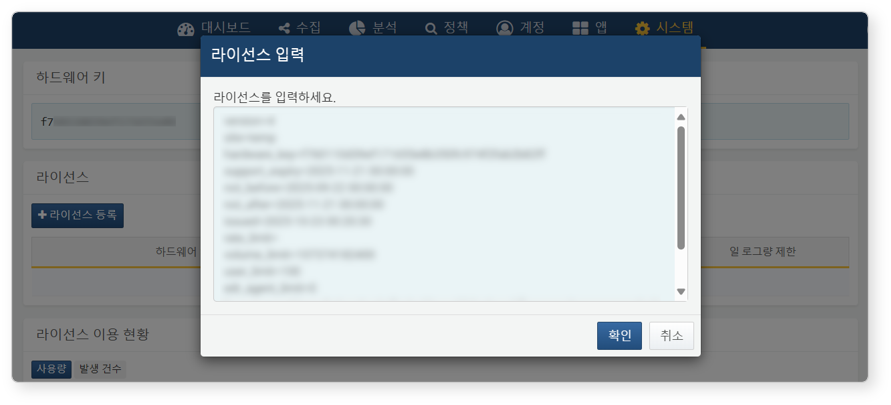

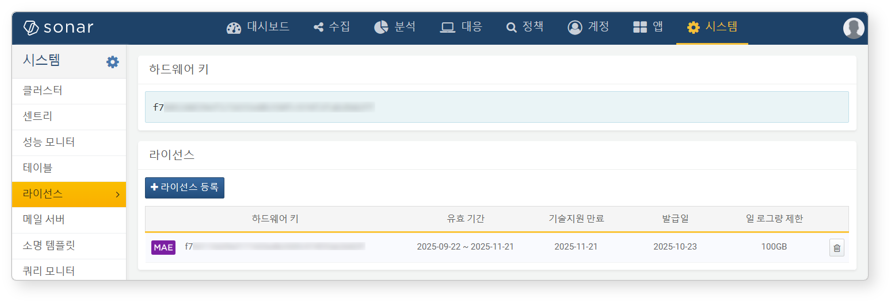

Click the Register License button on the System > License screen of the web console on both Node A and Node B.

-

Paste the issued license information into the input field and click the OK button.

-

Verify that the license has been registered successfully.

Control Node Pair Setup

Registering the Control Node Pair

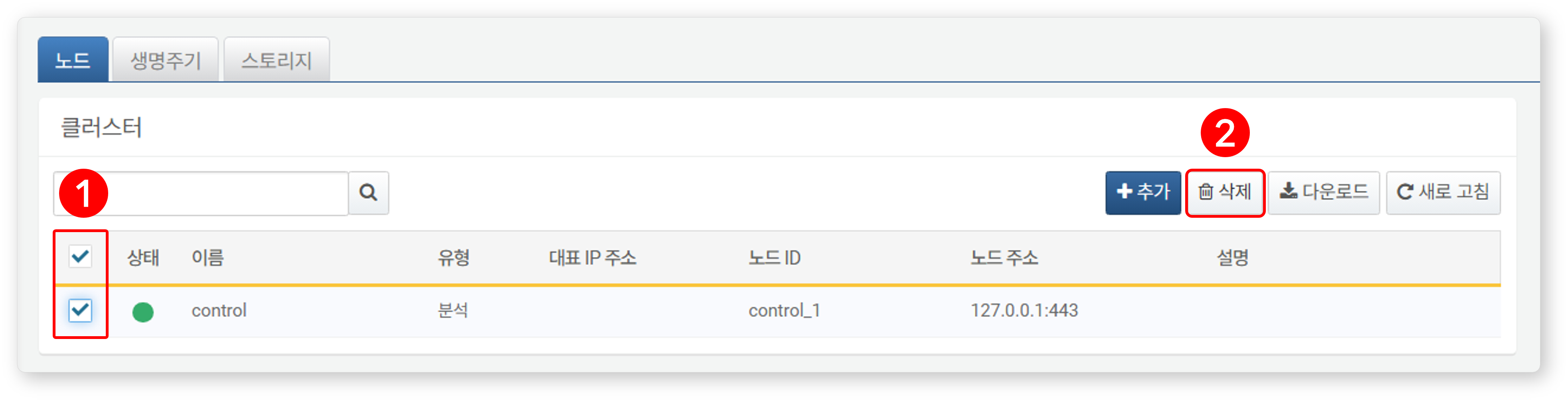

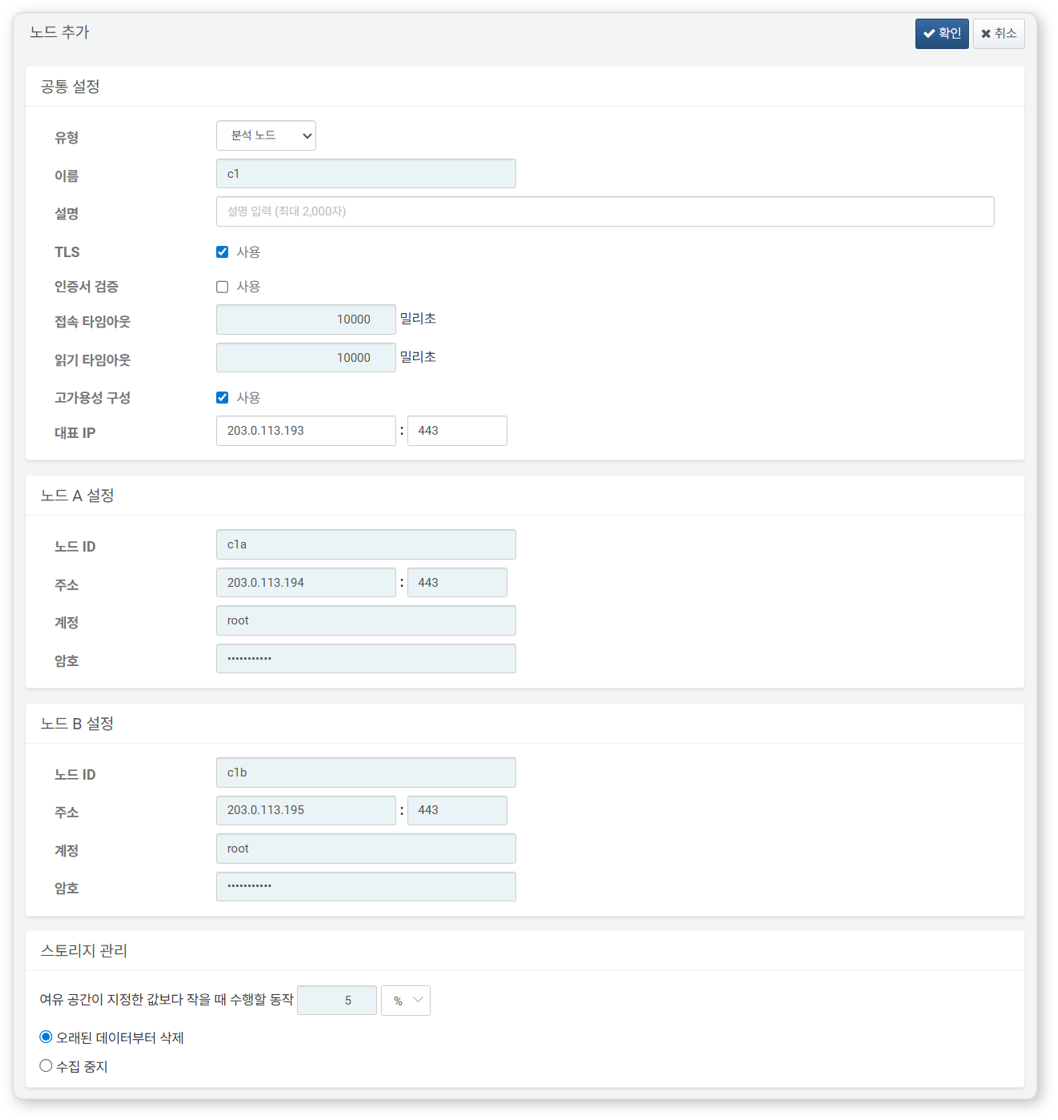

Register the node pair on the System > Cluster > Nodes screen of the Node A web console. The instructions here describe the process of deleting the existing node pair and reconfiguring it.

-

Navigate to the System > Cluster menu in the Node A web console.

-

Select the existing

controlnode and click the Delete button. -

Click the Add button in the upper-right corner of the cluster node list and configure the control node pair. For detailed descriptions of each property, refer to Adding a Node Pair in the user guide.

- Type: Select Control Node

- Name: Enter the control node pair identifier (e.g.,

d1)- This cannot be changed after clicking the OK button.

- Description: Description of the node pair (optional)

- TLS: Enabled

- Certificate Verification: Disabled

- Connection Timeout: Use default value

- Read Timeout: Use default value

- High Availability Configuration: Enabled

- Representative IP: IP address of the control node pair (e.g.,

203.0.113.193)- This IP address is used as the VIP in the HA script.

- Node A Settings

- Node ID: Enter the Node A identifier (e.g.,

c1a) - Address: Actual IP address and communication port of Node A (e.g.,

203.0.113.194:443)- The port number for the control node is

443.

- The port number for the control node is

- Account: Enter

root - Password: Enter the Sonar federation account password

- Node ID: Enter the Node A identifier (e.g.,

- Node B Settings

- Node ID: Node B identifier (e.g.,

c1b) - Address: Actual IP address and communication port of Node B (e.g.,

203.0.113.195:443) - Account: Enter

root - Password: Enter the Sonar federation account password

- Node ID: Node B identifier (e.g.,

-

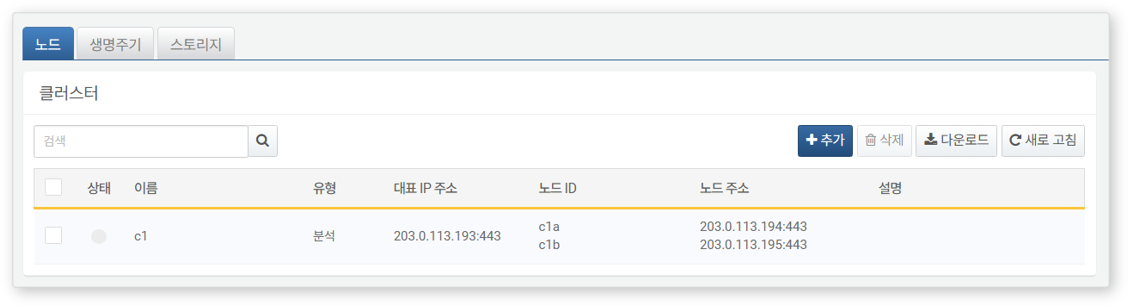

After completing the input, click the OK button. Verify that the control node pair has been added as shown below.

-

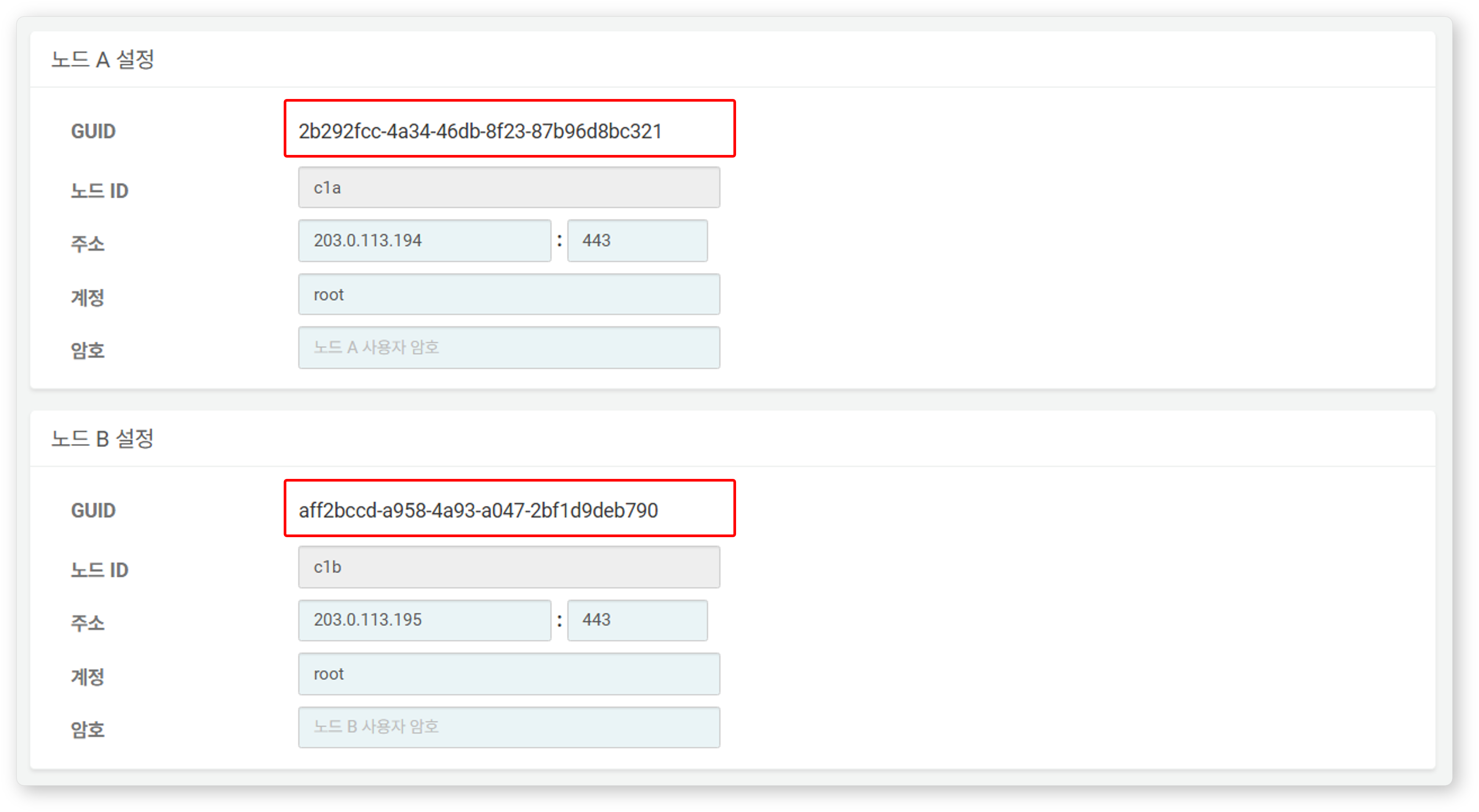

Click the Name of the added control node and check the GUIDs of Node A and Node B. If the Name or Node ID was specified incorrectly, you must delete the node pair and reconfigure it.

Record the GUIDs of Node A and Node B in a safe place. These values will be used in Node GUID Configuration.

Replicating the Key Encryption Key

Set the Key Encryption Key (KEK) from Node A identically on Node B. The key encryption key does not allow inter-node replication, so the administrator must copy it manually.

- Run the following command in the Logpresso shell on Node A and record the encryption key string in a safe place.

  sonar.cipherKey

- Copy the encryption key string from Node A, then run the following command in the Logpresso shell on Node B to configure the same encryption key. KEY_STRING is the encryption key string from Node A.

  sonar.setCipherKey KEY_STRING

Node GUID Configuration

In the Logpresso shell of each node, set the GUIDs recorded in Registering the Control Node Pair: the changed GUID of Node A on Node A, and the newly created GUID of Node B on Node B.

- Run the following command in the Logpresso shell on both Node A and Node B to set the policy synchronization GUID for each node in the Logpresso engine. GUID_STRING is the GUID string of each node.

  sonar.setGuid GUID_STRING # Enter the GUID corresponding to each node

  You can check the policy synchronization GUID of each node with the following command:

  sonar.nodeConfig

- Run the following command in the Logpresso shell on both Node A and Node B to set the cluster control GUID in the Logpresso engine.

  sonar.setControlGuid GUID_STRING # Enter the GUID corresponding to each node

  You can check the cluster control GUID of each node with the following command:

  sonar.controlNodeGuid

Master Node Connection Settings

Configure the master node connection information for synchronizing policies and settings within the cluster. For the control node, specify itself (127.0.0.1) as the master to load policies from MariaDB.

This setting is separate from the node pair registration information configured earlier in the web console. The node pair information is used when other nodes connect to this node. The master node connection information configured here is used when this node connects to the master and is stored in the Logpresso engine's confdb. Since the control node is its own master, 127.0.0.1 is specified.

-

Run the following command in the Logpresso shell on both Node A and Node B.

sonar.setMasterThe following describes the prompts displayed after running the command and the values to enter. The account used here is the federation account.

host? 127.0.0.1 # Specify itself as master port? 443 # Enter 443 account? root # Enter root, the federation account password? # Enter the federation account password connect timeout? 10000 # Press Enter to use the default value read timeout? 10000 # Press Enter to use the default value secure? true # Enter true (default is false) skip cert check? true # Enter true (default is false)Option Description Example host Control node address 127.0.0.1 port Port for communication with the control node 443 account Federation account login name root password Federation account password connect timeout Server connection wait time 10000 (ms) read timeout Response wait time 10000 (ms) secure Whether to apply TLS communication true skip cert check Whether to skip TLS certificate validation true -

Run the following command to verify that the node settings have been applied.

sonar.nodeConfigThe following is an example of the output when the settings have been configured correctly.

guid: 7bdb297d-1d2a-400b-8efc-ce8d98580a13 host=127.0.0.1, port=443, account=root, connectTimeout=10000, readTimeout=10000, secure=true, skipCertCheck=true crypto_file_path: /opt/logpresso/data/logpresso-ca/certs/storage.pfx -

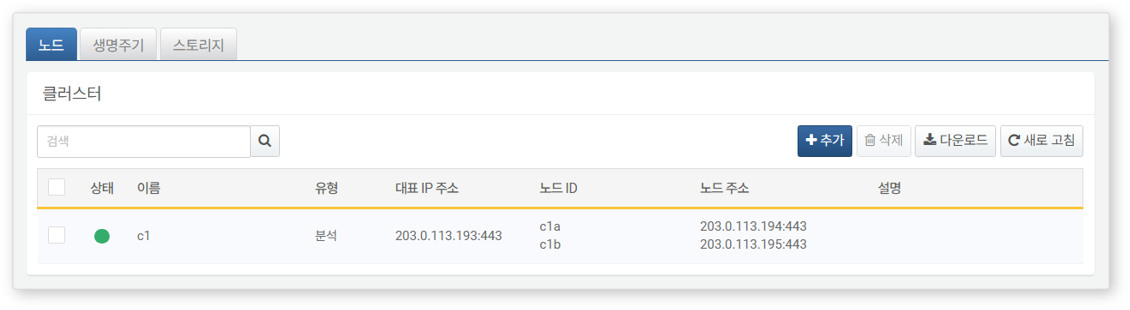

On the System > Cluster screen of the Node A web console, verify that the Status is displayed in green.

Data Replication Mode Configuration

When a node pair is configured, table data can be replicated between the two nodes. Here, "table" refers to the file-based tables where the Logpresso engine stores data, not database tables.

If identical data exists on both nodes, duplicate results may occur during distributed query execution. To prevent this, each node must be assigned a data replication mode of either ACTIVE or STANDBY.

- Active Node: Stores the original log table data and is included in distributed query searches.

- Standby Node: Receives real-time replication of log table data from the active node. Replicated tables use the same table ID as the originals and are excluded from distributed query searches, preventing duplicate results.

The logpresso.setActiveNode command designates the peer node as active, making the current node standby.

Node A Configuration (Active)

- Run the following command in the Logpresso shell on Node A to check the name of the pair node (Node B).

  logpresso.nodeStatuses

  The following is an example of the output. In the Federation Nodes section, [c1b] is the name of Node B.

  Local

  ------------------

  Node GUID: ffdade37-6d96-4d13-9843-88569d64db6a

  Instance GUID: 93e9d502-276f-468a-9311-019e1fed8a79

  Federation Nodes

  ------------------

  [c1b] node_guid=92eab23d-83e1-4ba2-91d6-48f5408b12a6, instance_guid=1a2eb7aa-85e2-4d73-a23e-9d8d1421605e, repl_mode=null, pair_guid=null, invalid_guid=false, alive=true, paired=true, failure=false, last connect=2025-06-09 13:51:39, last alive=2025-06-10 11:18:44, created=2025-06-09 13:48:37

  Note: The Node GUID and Instance GUID displayed in the logpresso.nodeStatuses command output are identifiers used by the Logpresso engine for log table replication and distributed queries. The Node GUID is used for database identification and is permanently stored in the DB_GUID file. The Instance GUID is the identifier for the currently running process and changes each time the node process starts.

- Run the following command to designate Node B as the standby node, which makes Node A the active node. In the command example, c1b is the name of Node B confirmed above.

  logpresso.setStandbyNode c1b

- Running the logpresso.nodeStatuses command again shows that the Replication Mode in the Local section has been set to ACTIVE.

  Local

  ------------------

  Node GUID: ffdade37-6d96-4d13-9843-88569d64db6a

  Instance GUID: 93e9d502-276f-468a-9311-019e1fed8a79

  Replication Mode: ACTIVE

  Pair Node: c1b

  Federation Nodes

  ------------------

  [c1b] node_guid=92eab23d-83e1-4ba2-91d6-48f5408b12a6, instance_guid=1a2eb7aa-85e2-4d73-a23e-9d8d1421605e, repl_mode=null, pair_guid=null, invalid_guid=false, alive=true, paired=true, failure=false, last connect=2025-06-09 16:56:24, last alive=2025-03-27 13:35:12, created=2025-06-09 16:56:24

Node B Configuration (Standby)

- Run the following command in the Logpresso shell on Node B to designate Node A as active, which makes Node B the standby node. c1a is the name of Node A confirmed from the logpresso.nodeStatuses command output.

  logpresso.setActiveNode c1a

- Running the logpresso.nodeStatuses command shows that the Replication Mode in the Local section has been set to STANDBY.

  Local

  ------------------

  Node GUID: ef5a8e0d-e80d-4b93-80a2-c8fec4b764ad

  Instance GUID: c1fb7d52-229b-464b-be06-4f7784001c17

  Replication Mode: STANDBY

  Pair Node: c1a

  Federation Nodes

  ------------------

  [c1a] node_guid=ffdade37-6d96-4d13-9843-88569d64db6a, instance_guid=93e9d502-276f-468a-9311-019e1fed8a79, repl_mode=ACTIVE, pair_guid=ef5a8e0d-e80d-4b93-80a2-c8fec4b764ad, invalid_guid=false, alive=true, paired=true, failure=false, last connect=2025-06-09 16:56:58, last alive=2025-03-27 13:37:34, created=2025-06-09 15:54:59
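When checking several nodes, the two values of interest in the logpresso.nodeStatuses output (the local replication mode and the peer node name) can be extracted from saved output. The following is a minimal sketch, assuming the output has been saved to a text file with one field per line, as the Logpresso shell appears to print it. The helper names local_repl_mode and federation_node_names are illustrative.

```shell
# Print the local replication mode (ACTIVE or STANDBY) from saved nodeStatuses output.
# Prints nothing when the mode has not been set yet.
local_repl_mode() {
    sed -n 's/^[[:space:]]*Replication Mode:[[:space:]]*\([A-Z]*\).*$/\1/p'
}

# Print the federation node names, i.e. the text inside [ ] at the start of a line.
federation_node_names() {
    sed -n 's/^[[:space:]]*\[\([^]]*\)\].*$/\1/p'
}

# Example (statuses.txt is a hypothetical file holding the saved output):
#   local_repl_mode < statuses.txt
#   federation_node_names < statuses.txt
```

On Node A the first function is expected to print ACTIVE and on Node B STANDBY once both nodes have been configured as described above.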

License Sharing Between Nodes

Configure all nodes in the cluster to be governed by the same license. The license registered on the control node is also applied to data nodes and forwarder nodes. This section describes how to propagate the license registered on the control node to other nodes.

- Run the following command in the Logpresso shell on both Node A and Node B to set the license master. Both nodes must be set as license masters.

  logpresso.setLicenseMode master

- Run the following command to check the license master status.

  logpresso.licenseMode

  If configured correctly, the output is as follows:

  MASTER

Enabling Distributed Queries

Distributed Query is a feature that allows querying table data distributed across multiple nodes with a single query. When a query is executed on the control node, the control node distributes the necessary queries to data node pairs, where the queries are executed. The control node aggregates the results from each data node and returns the final result. Distributed query settings must be enabled on all nodes.

- Run the following command in the Logpresso shell on both Node A and Node B to enable distributed queries.

  logpresso.enablePlanner

- Run the following command to check the distributed query status.

  logpresso.plannerStatus

  If enabled correctly, the output is as follows:

  Running: true

WebSocket Frame Configuration

If distributed query results are large or there is a large amount of data to send to the web browser, transmission may fail with the default WebSocket frame size.

- Run the following command in the Logpresso shell on both Node A and Node B to check the current setting.

  webconsole.maxFrameSize

  The default value is 8MB, and the command output is as follows:

  8,388,608 bytes

- Run the following command to change the frame size. The value in the example is 80MB (83,886,080 bytes).

  webconsole.setMaxFrameSize 83886080

- Run the webconsole.maxFrameSize command again to verify the changed value.

  83,886,080 bytes
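The frame size is given in bytes, so a size chosen in megabytes has to be converted first. The following is a minimal sketch of the arithmetic, assuming binary megabytes (1 MB = 1,048,576 bytes), which matches both the 8,388,608-byte default and the 83,886,080-byte example. The helper name mib_to_bytes is illustrative.

```shell
# Convert a size in binary megabytes (MiB) to bytes using integer arithmetic.
mib_to_bytes() {
    echo $(( $1 * 1024 * 1024 ))
}

mib_to_bytes 8     # default frame size: prints 8388608
mib_to_bytes 80    # example value:      prints 83886080
```

The printed number can be passed directly to webconsole.setMaxFrameSize.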

Query Cache Configuration

To optimize query performance, table metadata, inverted indexes, and bloom filters are cached in memory. The role of each cache is as follows:

- Table Cache: Caches log data read from disk during queries, allowing the same data to be returned directly from memory without disk I/O on subsequent queries.

- Inverted Index Cache: Caches the list of document IDs containing specific search terms for full-text search.

- Bloom Filter 0/1 Cache: A bloom filter is a probabilistic data structure that quickly determines whether a specific search term exists in a given segment. Bloom filter 0 is used for fast determination, and bloom filter 1 is used for precise determination.

Run the following commands in the Logpresso shell on both Node A and Node B to set the cache sizes.

logpresso.tableCacheConfig max_weight CACHE_SIZE # Table cache

logpresso.indexCacheConfig inverted max_weight CACHE_SIZE # Inverted index cache

logpresso.indexCacheConfig bloomfilter0 max_weight CACHE_SIZE # Bloom filter 0 cache

logpresso.indexCacheConfig bloomfilter1 max_weight CACHE_SIZE # Bloom filter 1 cache

Enter the CACHE_SIZE values from the following table based on daily processing volume and memory capacity. The input unit for cache values is bytes.

| Daily Volume | RAM | HEAP | DM | max_weight | inverted | bloomfilter0 | bloomfilter1 |

|---|---|---|---|---|---|---|---|

| 10GB/day | 32GB | 8GB | 9GB | 643,825,664 | 6,442,450,944 | 1,073,741,824 | 213,909,504 |

| 50GB/day | 64GB | 16GB | 26GB | 1,073,741,824 | 19,327,352,832 | 4,294,967,296 | 613,416,960 |

| 100GB/day+ | 128GB | 34GB | 68GB | 3,221,225,472 | 46,170,898,432 | 10,737,418,240 | 1,073,741,824 |

- max_weight: Table cache

- inverted: Inverted index cache

- bloomfilter0: Bloom filter 0 cache

- bloomfilter1: Bloom filter 1 cache

- Do not use commas (,) when entering values. Commas are shown in the table only for readability.

The same cache values expressed in MB and GB (binary units, 1 GB = 1,073,741,824 bytes) are as follows:

| Daily Volume | RAM | HEAP | DM | max_weight | inverted | bloomfilter0 | bloomfilter1 |

|---|---|---|---|---|---|---|---|

| 10GB/day | 32GB | 8GB | 9GB | 614MB | 6GB | 1GB | 204MB |

| 50GB/day | 64GB | 16GB | 26GB | 1GB | 18GB | 4GB | 585MB |

| 100GB/day+ | 128GB | 34GB | 68GB | 3GB | 43GB | 10GB | 1GB |
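Because the byte values in the first table contain commas that must not be typed into the Logpresso shell, it can help to generate the four commands from a table row. The following is a minimal sketch, assuming a POSIX shell. The helper names strip_commas and cache_commands are illustrative. The script only prints the commands, which are then entered in the Logpresso shell on each node.

```shell
# Remove thousands separators so a table value can be passed to the cache commands.
strip_commas() {
    printf '%s\n' "$1" | tr -d ','
}

# Print the four cache commands for one table row.
# Arguments (bytes, commas allowed): table cache, inverted index, bloom filter 0, bloom filter 1.
cache_commands() {
    printf 'logpresso.tableCacheConfig max_weight %s\n' "$(strip_commas "$1")"
    printf 'logpresso.indexCacheConfig inverted max_weight %s\n' "$(strip_commas "$2")"
    printf 'logpresso.indexCacheConfig bloomfilter0 max_weight %s\n' "$(strip_commas "$3")"
    printf 'logpresso.indexCacheConfig bloomfilter1 max_weight %s\n' "$(strip_commas "$4")"
}

# Values from the 10GB/day row of the byte table
cache_commands 643,825,664 6,442,450,944 1,073,741,824 213,909,504
```

For the 10GB/day row, the first printed line is logpresso.tableCacheConfig max_weight 643825664, which corresponds to 614MB in the converted table.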

Service Restart

The Logpresso service must be restarted to apply the changed settings. The order of operations is as follows:

- Stop order: Standby -> Active

- Start order: Active -> Standby

By default, Node A is active and Node B is standby. Starting the active node first during system operation allows the original data service to recover quickly, and the standby node can establish a replication connection immediately upon startup. If this order is not followed in an environment where a virtual IP address (VIP) is configured (described later), unnecessary VIP failovers may occur.

To stop the nodes:

- Run the following command on the standby node (Node B) to stop the Logpresso service.

  sudo systemctl stop logpresso

- After Node B has stopped, run the same command on the active node (Node A) to stop the service.

To start the nodes:

- Run the following command on Node A to start the Logpresso service.

  sudo systemctl start logpresso

- After Node A has started successfully, run the same command on Node B to start the Logpresso service.

- Log in to the Node A web console and navigate to System > Performance Monitor to verify that each control node's status is displayed in green.
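The stop and start order can be written down as a single command sequence so that it is not reversed by mistake. The following is a minimal sketch that only prints the commands, assuming the nodes are reachable over SSH under the names c1a and c1b and that the login user may run sudo there. Both are assumptions, and the helper name restart_plan is illustrative.

```shell
# Print the restart sequence for a node pair: stop standby first, start active first.
# Arguments: SSH host of the active node, SSH host of the standby node (both assumed).
restart_plan() {
    active="$1"
    standby="$2"
    echo "ssh $standby sudo systemctl stop logpresso"
    echo "ssh $active sudo systemctl stop logpresso"
    echo "ssh $active sudo systemctl start logpresso"
    # Confirm the unit is active on the active node before starting the standby node.
    echo "ssh $active systemctl is-active logpresso"
    echo "ssh $standby sudo systemctl start logpresso"
}

restart_plan c1a c1b
```

The systemctl is-active check only confirms that the service unit is running. The web console check in the last step above remains the authoritative verification.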

Network Redundancy

The control node pair shares a single virtual IP address (VIP) as a representative address, distributing load at the network level and automatically switching connections during failures. External clients such as data nodes, forwarder nodes, and web browsers access the control node through the VIP, maintaining service continuity regardless of individual node failures.

Network redundancy can be configured in the following two ways:

Load Balancer (Recommended)

Configure a load balancer such as an L4 switch to distribute traffic arriving at the VIP to control node A and control node B. Consider the following when configuring the load balancer:

- Health Check: Configure periodic health checks on port 443 (TCP) of each control node to verify node availability.

- Failover: Configure nodes that fail health checks to be automatically excluded from traffic distribution, and route traffic only to healthy nodes.
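Before configuring the load balancer, the same TCP check it will perform can be tried by hand from another host. The following is a minimal sketch, assuming bash and the coreutils timeout command are available. The helper names tcp_check and report_nodes are illustrative, and the addresses in the usage comment are the example addresses used in this guide.

```shell
# Return 0 if a TCP connection to host:port succeeds within 3 seconds.
# Uses bash's /dev/tcp redirection, so bash must be installed.
tcp_check() {
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Print "up" or "down" for port 443 on each host given as an argument.
report_nodes() {
    for node in "$@"; do
        if tcp_check "$node" 443; then
            echo "$node:443 up"
        else
            echo "$node:443 down"
        fi
    done
}

# Example: report_nodes 203.0.113.194 203.0.113.195
```

This only confirms that the port accepts connections, which corresponds to a TCP-level health check. It does not verify that the web console itself responds.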

HA Script

In environments where a load balancer is not available, you can configure VIP failover using the HA script provided by Logpresso. Request the HA script from the Logpresso technical support team.

Accessing the Web Console via the Representative IP Address

Once the configuration is complete, you can access the web console from a web browser using the representative IP address (e.g., https://203.0.113.193).